Turn to Tara: 'Scary new spin on a very old scam' - Scammers clone voices with help of AI for phony kidnapping threats

Consumer advocates are sounding the alarm about a growing scam involving artificial intelligence that has already left a trail of terrified parents across the country.

Imagine getting a phone call from your child, who is sobbing uncontrollably, and then hearing a man’s voice demand a ransom.

News 12’s Tara Rosenblum talked to one parent about the scam.

Jennifer DeStefano describes it as the most terrifying four minutes of her life.

“This man gets on the phone. He goes, ‘Listen here, I have your daughter. If you call anybody, if you reach out to anybody, I'm going to pump her stomach full of drugs and have my way with her, and I'm going to drop her in Mexico, and you're never going to see your daughter again’,” DeStefano says.

The faceless kidnapper then told her the only way to spare her 15-year-old child from unthinkable harm was to pay a $1 million ransom.

“At that point, I just started to panic,” she says.

The Arizona mom called 911 and then her husband – only to discover her daughter was safe with him.

“I didn't believe that she was really with my husband. I was sure that it was her voice. There was no doubt that it wasn’t her voice. I never questioned that it was not her voice,” DeStefano says.

Only, it wasn’t her. It was a clone created by scammers using artificial intelligence, a fast-growing technology that is increasingly falling into the wrong hands.

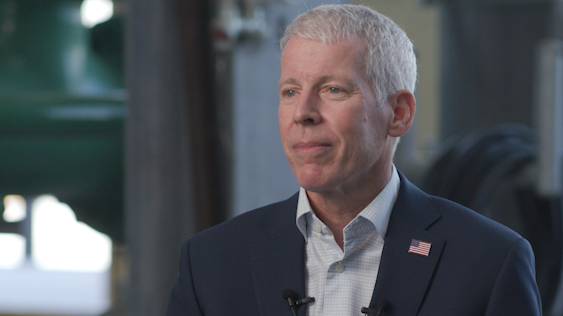

Brian Rauer, of the Better Business Bureau, doesn’t know exactly how many families across the tri-state area have fallen prey, but he says it’s only a matter of time before the list of local victims grows. He wants all parents to be on the lookout for AI voice cloning scams.

He says that as time goes by, AI gets better and easier to use.

Rauer says most scammers find their victims through social media and then demand that the parents pay large sums via gift cards, cryptocurrency or wire transfers. Others disguise themselves as legitimate businesses or government agencies.

“This is a kind of a scary new spin on a very old scam. You know, conversational AI creates these believable phishing scams that they expertly mimic legitimate organizations, banks, government agencies, social media companies. So, the scammer sends these unsolicited messages to potential victims, you know through email messaging apps, then it prompts these links for personal financial information, and they seem far more legitimate because these are compelling messages. They're modeled after the actual content from the bank, the government agency or organizations,” Rauer says.

Rauer advises people to scrutinize the text for red flags.

“AI-generated text can often use the same words repeatedly. And they can use short sentences in unimaginative language, and they can lack idioms or contractions. It can be very implausible statements. So, if the language raises your suspicion, walk away and independently verify the person with whom you're chatting,” he says.

DeStefano is sharing her story in hopes that more people can be informed.

“It was definitely horrific. It's not anything you ever want to hear or experience in that capacity, especially as a mother. It's about awareness. I had no idea that this was going on. I had no idea that AI had this ability or technology. Had I known, the situation would have been handled much differently,” she says.
